Sensor fusion

(→Mapping and localization (SLAM)) |

Gimate (Diskussion | Beiträge) (→Simulation: Link korrigiert) |

||

| (74 dazwischenliegende Versionen von einem anderen Benutzer werden nicht angezeigt) | |||

| Zeile 1: | Zeile 1: | ||

| + | =Abstract= | ||

| + | NOTE: Everything under development here. Just showing ideas! :-) | ||

| + | |||

| + | |||

| + | The actual state of the robot can be described completely by: | ||

| + | * speed (m/s) | ||

| + | * direction (degree) | ||

| + | * position (x,y - meter) | ||

| + | |||

| + | All sensors (gyro, acceleration, compass, odometry, perimeter signal strength, GPS, ...) can deliver certain information about the robot's state. However, each sensor is noisy (added with errors). | ||

| + | Sensor fusion is used to eliminate errors of each individual sensor. Each sensor gets a confidence weight that is automatically adjusted after reading the sensor by comparing its plausibility with the fusion result. | ||

| + | |||

| + | <gallery> | ||

| + | File: ardumower_localization.png | ||

| + | </gallery> | ||

| + | |||

=Sensor errors= | =Sensor errors= | ||

| − | = Odometry sensor= | + | |

| + | * Odometry: accumulation error (e.g. 15cm per meter), update rate: 10-20 Hz | ||

| + | * Gyro: accumulation error (e.g. 0.03 degree per second), update rate: 10-20 Hz | ||

| + | * Compass: noise (e.g. 1 degree), update rate: 10-20 Hz | ||

| + | * GPS: noise (e.g. 2m), update rate: 1-5 Hz | ||

| + | |||

| + | = Odometry sensor error= | ||

<gallery> | <gallery> | ||

| − | File: odometry_plot.jpg | + | File: odometry_plot.jpg | Odometry sensor error |

</gallery> | </gallery> | ||

| Zeile 14: | Zeile 36: | ||

The course can be permanently corrected by the compass sensor, the position by recalibration on the perimeter wire. | The course can be permanently corrected by the compass sensor, the position by recalibration on the perimeter wire. | ||

| − | =Sensor fusion= | + | =Sensor fusion (EKF)= |

| + | A Kalman or Extended Kalman Filter (EKF) filter can be used for sensor fusion. Sensor fusion helps improving precision of course and distance measurements by fusion of all available sensors. | ||

| + | |||

| + | Gyro => Rotation speed delPhi => | ||

| + | Course(GPS) => Course phiGPS => Kalman => Course phi => | ||

| + | Odometry => Distance Sl, Sr => Kalman => Position x,y, Course phi | ||

| + | |||

| + | Note: This estimated position will need to be corrected by an absolute position estimation, e.g. perimeter field strength (and a particle filter) or position recalibration at perimeter border. | ||

| + | |||

| + | =Sensor fusion (Gyrodometry)= | ||

| + | The idea of 'Gyrodometry' is to compare delta phi of gyro and odometry. If it is higher than a certain threshold, use gyro delta phi, otherwise odometry delta phi. | ||

| + | |||

| + | if (deltaPhiGyro - deltaPhiOdo) > deltaThres | ||

| + | phi = phi + deltaPhiGyro * T | ||

| + | else | ||

| + | phi = phi + deltaPhiOdo * T | ||

| + | |||

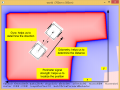

| + | =Position recalibration at perimeter border= | ||

| + | |||

| + | Because odometry error increases over time, the robot needs to periodically recalibrate its exact position (get the error to zero). This works by detecting its exact position on the perimeter wire (using cross correlation). | ||

| + | |||

| + | # Learn mode: robot completely tracks perimeter wire once and saves course (degree) and distance (odometry ticks) in a list (perimeter tracking map). | ||

| + | # Position detection: robot starts tracking the perimeter wire at arbitrary position until a high correlation with a subtrack of the perimeter tracking map is found. There it can stop tracking and knows its position on its perimeter tracking map. | ||

| + | |||

| + | <gallery> | ||

| + | File: ardumower_tracking_pos_sync.png | Position recalibration by driving perimeter wire | ||

| + | File: Ardumower pos recalibration2.png | Position recalibration by driving perimeter wire (another visualization) | ||

| + | File: Ardumower_border_angle.png | Position recalibration by estimating border angle | ||

| + | File: Perimeter_tracking_one_coil.png | One coil perimeter tracking | ||

| + | </gallery> | ||

=Mapping and localization (SLAM)= | =Mapping and localization (SLAM)= | ||

| + | |||

The perimeter magnetic field could be used as input for a robot position estimation. However, as both the magnetic field map and the robot position on it is unknown, the algorithm needs to calculate both at the same time. Such algorithms are called 'Simultaneous Localization and Mapping' (SLAM). | The perimeter magnetic field could be used as input for a robot position estimation. However, as both the magnetic field map and the robot position on it is unknown, the algorithm needs to calculate both at the same time. Such algorithms are called 'Simultaneous Localization and Mapping' (SLAM). | ||

| Zeile 26: | Zeile 78: | ||

* magnetic field map (including perimeter border) | * magnetic field map (including perimeter border) | ||

* robot position on that map (x,y,theta) | * robot position on that map (x,y,theta) | ||

| + | |||

| + | <gallery> | ||

| + | File: whatisslam.png | What is SLAM? | ||

| + | </gallery> | ||

Example SLAM algorithms: | Example SLAM algorithms: | ||

* [http://grauonline.de/alexwww/ardumower/particlefilter/particle_filter.html Particle filter]-based SLAM plus Rao-Blackwellization: model the robot’s path by sampling and compute the 'landmarks' given the poses | * [http://grauonline.de/alexwww/ardumower/particlefilter/particle_filter.html Particle filter]-based SLAM plus Rao-Blackwellization: model the robot’s path by sampling and compute the 'landmarks' given the poses | ||

| + | |||

| + | Example implementation: | ||

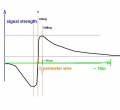

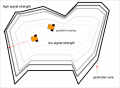

| + | The idea is to use a particle filter that constantly generates a certain amount of 'guesses' (particles, e.g. N=50) where the robot can be (depending on the last position, control input, speed sensor and heading sensor measurements). Each 'particle' is evaluated by the perimeter field measurement... | ||

| + | |||

| + | <gallery> | ||

| + | File: Perimeter_signal_strength.jpg | strength precision is low in the center | ||

| + | File: Perimeter_gradient.png | same strenght values are located on rings | ||

| + | File: Ardumower_perimeter2_test.jpg | real measurements over a complete lawn | ||

| + | File: Ardumower_slam_idea.png | complete SLAM idea | ||

| + | </gallery> | ||

| + | |||

| + | ... and the particle 'weight' is adjusted based on that. Bad particles are replaced by new particles with new guesses near the good guesses. This constantly produces particles with high probability of the robot's location. | ||

| + | |||

| + | <gallery> | ||

| + | File: Ardumower_slam.png | SLAM (using magnetic field sensor) | ||

| + | File: Particlefilter.png | SLAM (using classic LIDAR sensor) | ||

| + | File: Uwes_Perimeter_Ideas.jpg | SLAM (using 4 coils) | ||

| + | </gallery> | ||

| + | |||

| + | =SLAM simulation= | ||

| + | |||

| + | <gallery> | ||

| + | File: Ardumower_perimeter_magneticfield.png | Magnetic field localization using partcle filter | ||

| + | File: Particlefilter1.png | Start of particle filter | ||

| + | File: Particlefilter2.png | Particle filter after few robot movements | ||

| + | File: Particlefilter3.png | Particle filter following robot | ||

| + | File: Particlefilter4.png | Particle filter position error below 23 cm | ||

| + | </gallery> | ||

| + | |||

| + | # The simulator code can be found in [https://code.google.com/p/ardumower/source/checkout SVN] | ||

| + | # The simulator IDE+compiler+precompiled libs (CodeBlocks) can be downloaded [https://drive.google.com/file/d/0B90Bcwohn5_HNWlzbjNGSGZtYXc/view?usp=sharing here] - Extract the .zip to 'C:\codeblocks' and run 'sim.cbp' | ||

| + | |||

| + | |||

| + | Videos: | ||

| + | [https://www.youtube.com/watch?v=wLzi5HJiZoQ&feature=youtu.be Magnetic field localization using particle filter] | ||

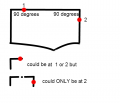

=Lane-by-lane mowing= | =Lane-by-lane mowing= | ||

| − | In the lawn-by-lane mowing pattern, the robot uses a fixed course. When hitting an obstacle, the new course is added by 180 degree, so that the robot enters a new lane. | + | In the lawn-by-lane mowing pattern, the robot uses a fixed course (accurate gyro + compass correction). When hitting an obstacle, the new course is added by 180 degree, so that the robot enters a new lane. |

| + | |||

| + | The robot always starts at the borders at an arbitrary choosen course, mowing lane by lane of a maximum length (distance determined by odometry). The maximum length ensures that the odometry position error does not get too high. At the perimeter, the robot can reduce the error to zero again (one axis). The arbitrary starting course ensures that even small gaps on the lawn are mown completely after a 2nd mowing session (where the robot started at another arbitrary course). | ||

| + | |||

<gallery> | <gallery> | ||

File:Turning180degree.png | Robot turns 180 degree and enters a new lane | File:Turning180degree.png | Robot turns 180 degree and enters a new lane | ||

| + | File: lanebylane1.png | Mowing segment by segment | ||

| + | </gallery> | ||

| + | |||

| + | The maximum allowed yaw error (course error) can computed like this: | ||

| + | |||

| + | yaw error=arcsin(lane width error/lane length) | ||

| + | |||

| + | Assuming a lane length of 10m, and a lane width error of +/- 0.1m, the maximum allowed yaw error is: | ||

| + | yaw error=arcsin(0.1m/10m) = 0.5 degree | ||

| + | |||

| + | Assuming that robot moves at 0.4m/sec, the actual yaw error can be computed like this: | ||

| + | actual time needed = 10 meters / 0.4m/sec = 25 seconds | ||

| + | |||

| + | Actual yaw error (assuming gyro noise 0.005 degree/sec): | ||

| + | actual yaw error = 0.005 degree/sec * 25 seconds = 0.125 degree | ||

| + | |||

| + | = Sensor logging = | ||

| + | For PC data analysis, algorithm modelling and optimization, you can collect robot sensor data using pfodApp like this: | ||

| + | |||

| + | # Using your Android pfodApp, connect to your robot and choose 'Log sensors'. The logged sensor data will be displayed. Click 'Back' to stop logging (NOTE: for ArduRemote, press Android menu button before and choose 'Enable logging' to enable file logging). | ||

| + | # Connect your Android phone to the PC, if being asked on the phone choose 'Enable as USB device', so you phone shows as a new Windows drive on your PC. | ||

| + | # On your PC, launch Windows Explorer and choose the new Android drive, browse to the 'pfodAppRawData' folder (for ArduRemote: 'ArduRemote' folder), and copy the data file to your PC (you can identify files by their Bluetooth name and date). | ||

| + | |||

| + | <gallery> | ||

| + | File: Pfodapp_start.png | 1. Start pfodApp | ||

| + | File: Pfodapp_log_sensors.png | 2. Choose 'Log sensors' | ||

| + | File: Pfodapp_log_sensors_exit.png | 3. Choose phone back button or 'Exit' | ||

| + | File: Android_enable_usb device.png | 4. Connect to your PC via USB, choose 'Enable as USB device' | ||

| + | File: log_sensor.png | 5. Using Windows explorer, browse to folder 'pfodAppRawData' on external Android drive to access sensor log file | ||

| + | </gallery> | ||

| + | |||

| + | = Simulation = | ||

| + | Here's [http://www.grauonline.de/alexwww/ardumower/sim/mower.html] a simulation of localization using odometry sensors. | ||

| + | |||

| + | <gallery> | ||

| + | File: ardumower_sim.jpg | Robot mower simulator | ||

</gallery> | </gallery> | ||

Current version as of 28 April 2017, 14:32


Abstract

NOTE: Everything under development here. Just showing ideas! :-)

The actual state of the robot can be described completely by:

- speed (m/s)

- direction (degree)

- position (x,y - meter)

All sensors (gyro, accelerometer, compass, odometry, perimeter signal strength, GPS, ...) deliver some information about the robot's state. However, each sensor is noisy (its readings contain errors). Sensor fusion is used to reduce the errors of each individual sensor. Each sensor gets a confidence weight that is automatically adjusted after each reading by comparing the reading's plausibility with the fusion result.
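The confidence-weight idea can be sketched as follows. This is only a minimal illustration, not the Ardumower implementation: the function names, the plausibility formula and the constants `gain` and `floor` are assumptions chosen for the example.

```python
def fuse(readings, weights):
    """Weighted mean of scalar sensor readings (e.g. speed in m/s)."""
    total = sum(weights.values())
    return sum(readings[name] * weights[name] for name in readings) / total


def adjust_weights(readings, weights, fused, gain=0.5, floor=0.05):
    """Lower the weight of sensors that disagree with the fused value.

    gain and floor are illustrative tuning constants, not values from the text.
    """
    new_weights = {}
    for name, value in readings.items():
        plausibility = 1.0 / (1.0 + abs(value - fused))  # 1.0 = perfect agreement
        blended = (1.0 - gain) * weights[name] + gain * plausibility
        new_weights[name] = max(floor, blended)
    return new_weights


# Example: three speed estimates in m/s, one of them an outlier.
readings = {"odometry": 0.40, "gps": 0.42, "accel": 0.90}
weights = {name: 1.0 for name in readings}
for _ in range(5):
    fused = fuse(readings, weights)
    weights = adjust_weights(readings, weights, fused)
fused = fuse(readings, weights)
```

After a few rounds the outlier sensor carries less weight than the two sensors that agree, so the fused value moves away from the plain average towards them.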

Sensor errors

- Odometry: accumulation error (e.g. 15cm per meter), update rate: 10-20 Hz

- Gyro: accumulation error (e.g. 0.03 degree per second), update rate: 10-20 Hz

- Compass: noise (e.g. 1 degree), update rate: 10-20 Hz

- GPS: noise (e.g. 2m), update rate: 1-5 Hz

Odometry sensor error

Over time, the odometry error accumulates, and the course (degree) and position (x/y) become imprecise.

Typical errors in outdoor conditions (wet lawn, steep slope):

- Distance: 20 cm per meter

- Course: 10 degrees per meter

The course can be continuously corrected by the compass sensor, the position by recalibration on the perimeter wire.

Sensor fusion (EKF)

A Kalman filter or Extended Kalman Filter (EKF) can be used for sensor fusion. Sensor fusion helps improve the precision of course and distance measurements by combining all available sensors.

Gyro => Rotation speed delPhi =>
Course(GPS) => Course phiGPS => Kalman => Course phi =>
Odometry => Distance Sl, Sr => Kalman => Position x,y, Course phi

Note: This estimated position will need to be corrected by an absolute position estimation, e.g. perimeter field strength (and a particle filter) or position recalibration at perimeter border.
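The course part of this chain (gyro rotation speed plus GPS course into a Kalman filter) might look like the sketch below. It is a plain scalar Kalman filter rather than a full EKF, and the class name, the noise values `q` and `r` and the initial variance are assumptions made for illustration.

```python
import math


def wrap_deg(angle):
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


class HeadingKalman:
    """Scalar Kalman filter: gyro rate drives prediction, GPS course corrects."""

    def __init__(self, phi=0.0, variance=100.0, q=0.01, r=4.0):
        self.phi = phi            # course estimate (degree)
        self.variance = variance  # estimate variance (degree^2)
        self.q = q                # process noise added per second (assumed)
        self.r = r                # GPS course measurement variance (assumed)

    def predict(self, del_phi, dt):
        """Integrate the gyro rotation speed del_phi (degree/s) over dt seconds."""
        self.phi = wrap_deg(self.phi + del_phi * dt)
        self.variance += self.q * dt

    def update(self, phi_gps):
        """Correct the course with a GPS course measurement (degree)."""
        gain = self.variance / (self.variance + self.r)
        innovation = wrap_deg(phi_gps - self.phi)  # shortest angular difference
        self.phi = wrap_deg(self.phi + gain * innovation)
        self.variance *= (1.0 - gain)
        return gain
```

A typical loop would call `predict()` at the gyro rate (10-20 Hz) and `update()` whenever a new GPS course arrives (1-5 Hz). The resulting course phi can then be combined with the odometry distances Sl and Sr to update the position.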

Sensor fusion (Gyrodometry)

The idea of 'Gyrodometry' is to compare the delta phi (rotation rate) reported by the gyro with the one derived from odometry. If the two differ by more than a certain threshold, use the gyro delta phi for that sample interval T, otherwise the odometry delta phi.

if abs(deltaPhiGyro - deltaPhiOdo) > deltaThres
  phi = phi + deltaPhiGyro * T
else
  phi = phi + deltaPhiOdo * T
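As a runnable illustration, the rule can be written as a small Python function. The sketch assumes both delta phi values are rotation rates in degree/s and compares their absolute difference; the function and parameter names are chosen for the example.

```python
def gyrodometry_step(phi, rate_gyro, rate_odo, dt, threshold):
    """Advance the course phi (degree) by one sample interval dt (seconds).

    rate_gyro and rate_odo are rotation rates in degree/s. When they disagree
    by more than threshold, the gyro is trusted for this interval (the wheels
    probably slipped or hit a bump); otherwise odometry is used.
    """
    if abs(rate_gyro - rate_odo) > threshold:
        return phi + rate_gyro * dt
    return phi + rate_odo * dt
```

For example, with a threshold of 1 degree/s, rates of 10.0 (gyro) and 10.2 (odometry) keep the odometry value, while 10.0 versus 25.0 switches to the gyro for that interval.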

Position recalibration at perimeter border

Because the odometry error increases over time, the robot needs to periodically recalibrate its position (reset the error to zero). This works by detecting its exact position on the perimeter wire (using cross-correlation).

- Learn mode: robot completely tracks perimeter wire once and saves course (degree) and distance (odometry ticks) in a list (perimeter tracking map).

- Position detection: robot starts tracking the perimeter wire at arbitrary position until a high correlation with a subtrack of the perimeter tracking map is found. There it can stop tracking and knows its position on its perimeter tracking map.
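The position detection step could be sketched like this. The sketch assumes the perimeter tracking map is a list of courses (degree) sampled at equal odometry distances, and it uses the mean cosine of the course differences as a simple correlation score; the function names and the `min_score` threshold are illustrative assumptions.

```python
import math


def match_score(track_map, observed, offset):
    """Mean cosine of the course differences; 1.0 means a perfect match."""
    n = len(track_map)
    total = 0.0
    for k, course in enumerate(observed):
        reference = track_map[(offset + k) % n]  # the perimeter is a closed loop
        total += math.cos(math.radians(reference - course))
    return total / len(observed)


def locate_on_perimeter(track_map, observed, min_score=0.98):
    """Return (offset, score) of the best match, or None if it is too weak."""
    best_offset, best_score = max(
        ((offset, match_score(track_map, observed, offset))
         for offset in range(len(track_map))),
        key=lambda item: item[1],
    )
    if best_score < min_score:
        return None
    return best_offset, best_score


# Learn mode result: courses along a rectangular perimeter (degree).
track_map = [0, 0, 0, 0, 90, 90, 90, 180, 180, 180, 180, 270, 270, 270]
# Slightly noisy courses recorded while tracking from an unknown start point.
observed = [2, 88, 91, 89, 178]
result = locate_on_perimeter(track_map, observed)
```

Here the best match starts at map index 3, so the robot's current position corresponds to index 3 + len(observed) - 1 on the perimeter tracking map. A short or featureless subtrack (e.g. only a straight segment) matches many offsets equally well, which is why the robot keeps tracking until the correlation is high and unambiguous.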

Mapping and localization (SLAM)

The perimeter magnetic field could be used as input for estimating the robot position. However, as both the magnetic field map and the robot position on it are unknown, the algorithm needs to calculate both at the same time. Such algorithms are called 'Simultaneous Localization and Mapping' (SLAM).

Input to SLAM algorithm:

- control values (speed, steering)

- observation values (speed, heading, magnetic field strength)

Output from SLAM algorithm:

- magnetic field map (including perimeter border)

- robot position on that map (x,y,theta)

Example SLAM algorithms:

- Particle filter-based SLAM plus Rao-Blackwellization: model the robot’s path by sampling and compute the 'landmarks' given the poses

Example implementation: The idea is to use a particle filter that constantly generates a certain number of 'guesses' (particles, e.g. N=50) of where the robot could be (depending on the last position, control input, speed sensor and heading sensor measurements). Each particle is evaluated against the perimeter field measurement, and the particle 'weight' is adjusted based on that. Bad particles are replaced by new particles with new guesses near the good guesses. This constantly produces particles with a high probability of being at the robot's location.
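The predict, weight and resample cycle can be sketched as follows. Note the simplifications: this sketch localizes against a known field map instead of building the map at the same time (so it is not full SLAM), and `field_strength()` is a made-up stand-in for the perimeter field, not a physical model. All names and noise values are assumptions.

```python
import math
import random


def field_strength(x, y):
    """Hypothetical stand-in for the perimeter field map (not a physical model)."""
    return math.hypot(x - 5.0, y - 5.0)


def predict(particles, distance, heading_deg, rng, noise=0.05):
    """Move every particle by the measured distance and heading plus noise."""
    heading = math.radians(heading_deg)
    return [(x + distance * math.cos(heading) + rng.gauss(0.0, noise),
             y + distance * math.sin(heading) + rng.gauss(0.0, noise))
            for x, y in particles]


def weigh(particles, measured, sigma=0.2):
    """Weight each particle by how well the map explains the measurement."""
    weights = [math.exp(-0.5 * ((field_strength(x, y) - measured) / sigma) ** 2)
               for x, y in particles]
    total = sum(weights)
    if total == 0.0:  # every guess is implausible: fall back to equal weights
        return [1.0 / len(particles)] * len(particles)
    return [w / total for w in weights]


def resample(particles, weights, rng, jitter=0.05):
    """Replace bad particles with new guesses near the good ones."""
    chosen = rng.choices(particles, weights=weights, k=len(particles))
    return [(x + rng.gauss(0.0, jitter), y + rng.gauss(0.0, jitter))
            for x, y in chosen]


def estimate(particles):
    """Mean position of the particle cloud."""
    n = len(particles)
    return (sum(x for x, _ in particles) / n, sum(y for _, y in particles) / n)


# Example run: N=50 particles on a 10 m x 10 m lawn, robot driving along x.
rng = random.Random(1)
particles = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(50)]
true_x, true_y = 2.0, 3.0
for _ in range(20):
    true_x += 0.2
    particles = predict(particles, 0.2, 0.0, rng)
    weights = weigh(particles, field_strength(true_x, true_y))
    particles = resample(particles, weights, rng)
position = estimate(particles)
```

Because a single field strength value is shared by a whole ring of positions, one measurement cannot pin down the robot. The filter only narrows the cloud as the robot moves and several measurements are combined with the motion estimate.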

SLAM simulation

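- The simulator code can be found in SVN (https://code.google.com/p/ardumower/source/checkout)

- The simulator IDE+compiler+precompiled libs (CodeBlocks) can be downloaded here: https://drive.google.com/file/d/0B90Bcwohn5_HNWlzbjNGSGZtYXc/view?usp=sharing - Extract the .zip to 'C:\codeblocks' and run 'sim.cbp'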

Videos:

Magnetic field localization using particle filter (https://www.youtube.com/watch?v=wLzi5HJiZoQ&feature=youtu.be)

Lane-by-lane mowing

In the lane-by-lane mowing pattern, the robot holds a fixed course (accurate gyro + compass correction). When it hits an obstacle, 180 degrees are added to the course, so that the robot enters a new lane.

The robot always starts at the border with an arbitrarily chosen course, mowing lane by lane up to a maximum lane length (distance determined by odometry). The maximum length ensures that the odometry position error does not grow too large. At the perimeter, the robot can reduce the error to zero again (on one axis). The arbitrary starting course ensures that even small gaps on the lawn are mown completely after a second mowing session (in which the robot starts with a different arbitrary course).

The maximum allowed yaw error (course error) can be computed like this:

yaw error = arcsin(lane width error / lane length)

Assuming a lane length of 10 m and a lane width error of +/- 0.1 m, the maximum allowed yaw error is:

yaw error = arcsin(0.1 m / 10 m) = 0.57 degree (approx.)

Assuming that the robot moves at 0.4 m/s, the time needed for one lane is:

time needed = 10 m / 0.4 m/s = 25 seconds

Actual yaw error (assuming a gyro drift of 0.005 degree/s):

actual yaw error = 0.005 degree/s * 25 seconds = 0.125 degree

This is below the maximum allowed yaw error.
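The same calculation as a short script, which makes it easy to try other lane lengths, speeds or gyro figures. The variable names are chosen for this example; the numbers are the ones used above.

```python
import math

lane_length = 10.0       # m
lane_width_error = 0.1   # m
speed = 0.4              # m/s
gyro_drift = 0.005       # degree/s

# Maximum yaw error that still keeps the lane within the allowed width error.
max_yaw_error = math.degrees(math.asin(lane_width_error / lane_length))

# Yaw error the gyro accumulates while driving one lane.
lane_time = lane_length / speed
actual_yaw_error = gyro_drift * lane_time

print(f"maximum allowed yaw error: {max_yaw_error:.2f} degree")
print(f"time per lane: {lane_time:.0f} s")
print(f"actual yaw error: {actual_yaw_error:.3f} degree")
```

Evaluating the arcsin gives about 0.57 degree, so the accumulated 0.125 degree stays within the limit for a 10 m lane.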

Sensor logging

For PC data analysis, algorithm modelling and optimization, you can collect robot sensor data using pfodApp like this:

- Using pfodApp on your Android phone, connect to your robot and choose 'Log sensors'. The logged sensor data will be displayed. Click 'Back' to stop logging (NOTE: for ArduRemote, first press the Android menu button and choose 'Enable logging' to enable file logging).

- Connect your Android phone to the PC. If asked on the phone, choose 'Enable as USB device', so that your phone shows up as a new Windows drive on your PC.

- On your PC, launch Windows Explorer and choose the new Android drive, browse to the 'pfodAppRawData' folder (for ArduRemote: 'ArduRemote' folder), and copy the data file to your PC (you can identify files by their Bluetooth name and date).

Simulation

Here's a simulation of localization using odometry sensors: http://www.grauonline.de/alexwww/ardumower/sim/mower.html
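The core of odometry-based localization is a dead-reckoning update from the left and right wheel distances (Sl, Sr). The sketch below is a generic differential-drive update, not code taken from the simulator; the function name and the `wheel_base` parameter are assumptions.

```python
import math


def odometry_step(x, y, phi, s_left, s_right, wheel_base):
    """Dead-reckoning update for a differential-drive robot.

    x, y are in meters, phi is the course in radians, s_left and s_right are
    the distances (m) travelled by each wheel since the last update, and
    wheel_base is the distance between the wheels (m).
    """
    distance = (s_left + s_right) / 2.0
    d_phi = (s_right - s_left) / wheel_base
    # Use the course at the middle of the step to reduce integration error.
    mid_phi = phi + d_phi / 2.0
    x += distance * math.cos(mid_phi)
    y += distance * math.sin(mid_phi)
    return x, y, phi + d_phi
```

Every wheel distance error is integrated into x, y and phi and never removed, which is why the position drifts and needs an absolute correction such as the perimeter recalibration.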